Has it been noted that McGee conditionals seem to clash with the Simplification of Disjunctive Antecedents (SDA)?

Consider the following conditional, inspired by McGee (1985).

(1) If a Republican had won then if it hadn't been Reagan then it would have been Andersen.

For context, imagine a scenario in which there were exactly two Republican candidates for the office in question, called Reagan and Andersen. Neither won. In this kind of context, (1) seems fine. So does (2).

(2) If Reagan or Andersen had won then if Reagan hadn't won then Andersen would have won.

Now, SDA (in its strong form) is the hypothesis that a conditional of the form 'if A or B then C' is equivalent to the conjunction of 'if A then C' and 'if B then C'. Applying this to (2), we would predict that (2) is equivalent to the conjunction of (3) and (4).
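
Schematically, and writing \( A > C \) for 'if A then C' (a notation assumed here purely for illustration), the strong form of SDA can be put as follows:

(SDA) \( ((A \lor B) > C) \Leftrightarrow ((A > C) \land (B > C)) \).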

It is well known that disjunctive possibility and necessity statements appear to imply the possibility of the disjuncts:

(FC) \( \Diamond(p \lor q) \Rightarrow \Diamond p \land \Diamond q \).

(RP) \( \Box(p \lor q) \Rightarrow \Diamond p \land \Diamond q \).

The first kind of inference is known as a "free choice" inference, the second is "Ross's Paradox".

For example, (1a) seems to imply (1b) and (1c):

(1a) Alice might [or: must] have gone to the party or to the concert.

(1b) Alice might have gone to the party.

(1c) Alice might have gone to the concert.
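
For comparison, neither inference is valid if 'might' and 'must' are read as the diamond and box of plain Kripke semantics with classical disjunction. Here is a minimal sketch of a countermodel; the world names, the valuation, and the tuple encoding of formulas are all made up for illustration.

```python
# Toy Kripke model. World names and valuation are hypothetical.
ACCESS = {"w0": {"w1"}, "w1": {"w1"}}   # accessibility relation
VAL = {"p": {"w1"}, "q": set()}         # q is true at no world


def true_at(formula, world):
    """Evaluate a formula (an atom name or a nested tuple) at a world."""
    if isinstance(formula, str):
        return world in VAL[formula]
    op, *args = formula
    if op == "or":
        return true_at(args[0], world) or true_at(args[1], world)
    if op == "and":
        return true_at(args[0], world) and true_at(args[1], world)
    if op == "dia":  # true at some accessible world
        return any(true_at(args[0], v) for v in ACCESS[world])
    if op == "box":  # true at all accessible worlds
        return all(true_at(args[0], v) for v in ACCESS[world])
    raise ValueError(f"unknown operator: {op}")


p_or_q = ("or", "p", "q")
both_possible = ("and", ("dia", "p"), ("dia", "q"))

fc_premise = true_at(("dia", p_or_q), "w0")   # premise of (FC)
rp_premise = true_at(("box", p_or_q), "w0")   # premise of (RP)
conclusion = true_at(both_possible, "w0")     # shared conclusion

print(fc_premise, rp_premise, conclusion)
```

At `w0` both premises come out true while the conclusion is false, because `q` holds at no accessible world. So whatever explains the intuitive inferences, it is not the classical semantics alone.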

In chapter 3 of his dissertation (Booth (2022)), Richard Booth points out that (FC) and (RP) underdescribe the true effect.

In around 2009, I got interested in counterpart-theoretic interpretations of modal predicate logic. Lewis's original semantics, from Lewis (1968), has some undesirable features, due to his choice of giving the box a "strong" reading (in the sense of Kripke (1971)), but it's not hard to define a better-behaved form of counterpart semantics that gives the box its more familiar "weak" reading.

Wondering if anyone had figured out the logic determined by this semantics, I found an answer in Kutz (2000) and Kracht and Kutz (2002). I also learned that counterpart semantics seems to overcome some formal limitations of the more standard "Kripke semantics". For example, while all logics between quantified S4.3 and S5 are incomplete in Kripke semantics (as shown in Ghilardi (1991)), many are apparently complete in the "functor semantics" of Ghilardi (1992), which I do not understand but which is said to have a counterpart-theoretic flavour. Skvortsov and Shehtman (1993) present a somewhat more accessible "metaframe semantics", inspired by Ghilardi's approach, and claim that the quantified versions of all canonical extensions of S4 remain canonical (and hence complete) in metaframe semantics. Kracht and Kutz argue that their – much simpler – counterpart semantics inherits these properties of functor and metaframe semantics.

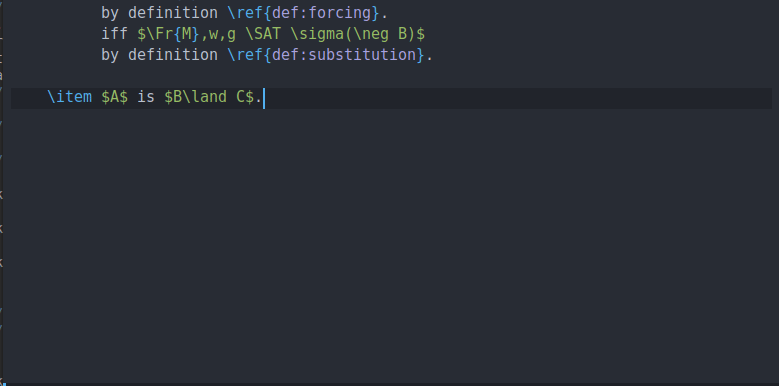

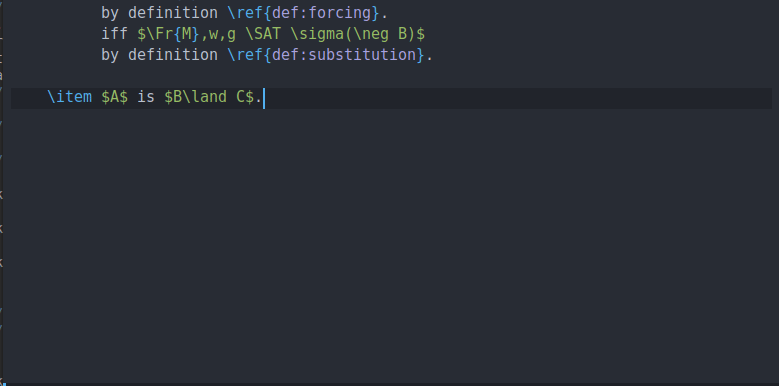

I've been using GitHub Copilot for a while now to write philosophy and logic texts. It's definitely useful for more technical writing. Here you can see how it fills in a clause in a proof by induction:

Champollion, Ciardelli, and Zhang (2016) argue that truth-conditionally equivalent sentences can make different contributions to the truth-conditions of larger sentences in which they embed. This seems obviously true. 'There are infinitely many primes' and Fermat's Last Theorem are truth-conditionally equivalent, but 'I can prove that there are infinitely many primes' is true, while 'I can prove that there are no integers a, b, c, and n > 2 for which \( a^n + b^n = c^n \)' is false. Champollion, Ciardelli, and Zhang (henceforth, CCZ) have a more interesting case in mind. They argue that substituting logically equivalent sentences in the antecedent of a subjunctive conditional can make a difference to the conditional's truth-value.

I want to say something about a passage in Christensen (2023) that echoes a longer discussion in Christensen (2007).

Here's a familiar kind of scenario from the debate about higher-order evidence.

Wilhelm (2021) and Lando (2022) argue that the Sleeping Beauty problem reveals a flaw in standard accounts of credence and chance. The alleged flaw is that these accounts can't explain how attitudes towards centred propositions are constrained by information about chance.

I assume you remember the Sleeping Beauty problem. (If not, look it up: it's fun.) Wilhelm makes the following assumptions about Beauty's beliefs on Monday morning.

First, Beauty can't be sure that it is Monday:

Decision theory textbooks often distinguish between decision-making under risk and decision-making under uncertainty or ignorance. The former is supposed to arise in situations where the agent can assign probabilities to the relevant states, the latter in situations where they can't.
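
To make the textbook distinction concrete, here is a minimal sketch. The payoff table and the probabilities are made up, and maximin is just one commonly taught rule for the "ignorance" case, picked here for illustration.

```python
# Hypothetical payoff table: utilities[act][state]. All numbers are made up.
utilities = {
    "umbrella": {"rain": 5, "sun": 3},
    "no_umbrella": {"rain": 0, "sun": 10},
}


def best_by_expected_utility(utilities, probs):
    """Decision under risk: pick the act with the highest expected utility."""
    def expected_utility(act):
        return sum(probs[state] * u for state, u in utilities[act].items())
    return max(utilities, key=expected_utility)


def best_by_maximin(utilities):
    """Decision under ignorance: pick the act with the best worst case."""
    return max(utilities, key=lambda act: min(utilities[act].values()))


# Assumed probabilities for the "risk" case.
risk_choice = best_by_expected_utility(utilities, {"rain": 0.2, "sun": 0.8})
ignorance_choice = best_by_maximin(utilities)

print(risk_choice, ignorance_choice)
```

With these numbers the two rules come apart: expected utility favours `no_umbrella`, while maximin favours `umbrella`. The second rule never consults a probability, which is the sense in which it is meant for agents who supposedly have none.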

I've always found this puzzling. Why would a decision maker be unable to assign probabilities (even vague or indeterminate ones) to the states? I don't think there are any such situations.

I haven't looked at the history of this distinction, but I suspect it comes from von Neumann, who (I suspect) had no concept of subjective probability. If the only relevant probabilities are objective, then of course it may happen that an agent can't make their choice depend on the probability of the states because these probabilities may not be known.

Covid finally caught me, so I fell behind with everything. Let's try to get back to the blogging schedule. This time, I want to recommend DiPaolo (2019). It's a great paper that emphasizes the difference between ideal ("primary") and non-ideal ("secondary") norms in epistemology.

The central idea is that epistemically fallible agents are subject to different norms than infallible agents. An ideal rational agent would, for example, never make a mistake when dividing a restaurant bill. For them, double-checking the result is a waste of time. They shouldn't do it. We non-ideal folk, by contrast, should sometimes double-check the result. As the example illustrates, the "secondary" norms for non-ideal agents aren't just softer versions of the "primary" norms for ideal agents. They can be entirely different.

I've been reading Fabrizio Cariani's The Modal Future (Cariani (2021)). It's great. I have a few comments.

This book is about the function of expressions like 'will' or 'gonna' that are typically used to talk about the future, as in (1).

(1) I will write the report.

Intuitively, (1) states that a certain kind of writing event takes place – but not right here and now. 'Will' is a displacement operator, shifting the point of evaluation. Where exactly does the writing event have to take place in order for (1) to be true?

Here's a natural first idea. (1) is true as long as a relevant writing event takes place at some point in the future. This yields the standard analysis of 'will' in tense logic: